From O(n) to O(1): How We Fixed Our API Key Validation Performance

How we enhanced API performance: Reducing latency by 200ms using Hashing

I'm a software engineer with 7+ years of experience designing, implementing, and debugging software, including backend services, automation tools, and mobile SDKs.

I love building things and am currently building lessentext.com

When you're building a SaaS API, every millisecond counts. We recently discovered that our API key validation was a ticking time bomb—and fixed it before it exploded.

The Problem

Our API uses bearer tokens for authentication. Every request includes an API key:

Authorization: Bearer paymint_production_apikey_a1b2c3...

For security, we encrypt API keys before storing them in the database. The encryption uses AES-256-GCM, which means we can't simply query for a matching key—we have to decrypt each one to compare.

Here's what our original validation looked like:

export async function validateApiKey(apiKey: string): Promise<ValidationResult> {
  // Get ALL active API keys
  const apiKeyRecords = await database.apiKey.findMany({
    where: { status: 'active' },
  });

  // Decrypt each one and compare
  for (const record of apiKeyRecords) {
    const decryptedKey = decrypt(record.encryptedKey);
    if (decryptedKey === apiKey) {
      return {
        isValid: true,
        organizationId: record.organizationId
      };
    }
  }

  return { isValid: false };
}

This works. But there's a problem hiding in plain sight.

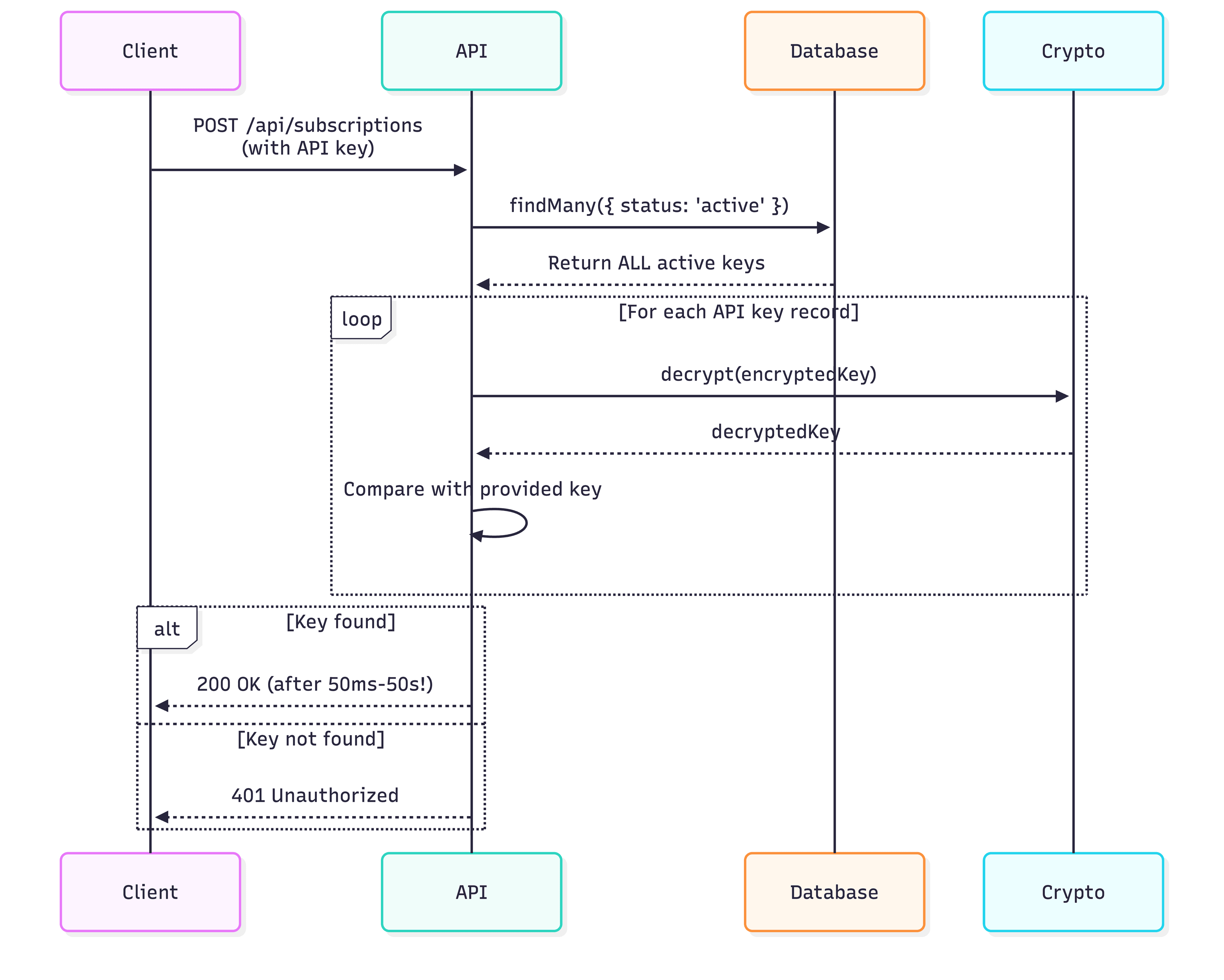

Figure: The Request Flow Problem

The Math

Let's say decryption takes 5ms per key (it's actually faster, but let's be conservative).

| Active API Keys | Time to Validate | Performance Impact |
| --- | --- | --- |
| 10 | 50ms | Noticeable |
| 100 | 500ms | Unacceptable |
| 1,000 | 5 seconds | Business-breaking |
| 10,000 | 50 seconds | Timeout territory |

Every API request (fetching products, listing subscriptions, canceling a subscription) would have to wait for this validation. At 1,000 keys, that's up to 5 seconds of added latency per request in the worst case, when the matching key is the last one checked or the key is invalid.

This is O(n) complexity. As our customer base grows, performance degrades linearly. We needed O(1).
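The numbers in the table are simple arithmetic: worst-case validation time is the number of active keys multiplied by the per-key decryption cost. A minimal sketch, assuming the same conservative 5ms estimate (the function and constant names are illustrative, not from our codebase):

```typescript
// Conservative per-key decryption estimate used in the table above.
const DECRYPT_MS = 5;

// Worst case for the linear scan: the matching key is checked last, or the
// key is invalid, so every active key is decrypted once.
function worstCaseValidationMs(activeKeys: number, decryptMs: number = DECRYPT_MS): number {
  return activeKeys * decryptMs;
}

for (const n of [10, 100, 1000, 10000]) {
  console.log(`${n} keys -> ${worstCaseValidationMs(n)}ms`);
}
```

On average a valid key is found about halfway through the scan, but invalid keys always pay the full cost.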

Why Not Just Query the Encrypted Key?

You might think: "Just store the encrypted key and query for it directly."

SELECT * FROM api_keys WHERE encrypted_key = ?

This doesn't work because encryption is non-deterministic. AES-GCM uses a random initialization vector (IV) for each encryption, so encrypting the same plaintext twice produces different ciphertexts.

encrypt('my_api_key') // => 'abc123...'

encrypt('my_api_key') // => 'xyz789...' (different!)

This is actually a security feature—it prevents attackers from identifying duplicate keys by comparing ciphertexts. But it means we can't use encrypted values for database lookups.
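This is easy to reproduce with Node's built-in crypto module. The sketch below is a stand-in for our encrypt() helper, not the real one: encryptDemo, demoKey, and the IV + tag + ciphertext layout are assumptions made for illustration.

```typescript
import { createCipheriv, randomBytes } from 'node:crypto';

// Hypothetical stand-in for encrypt(): AES-256-GCM with a fresh random
// 12-byte IV on every call. Output packs IV + auth tag + ciphertext as hex.
function encryptDemo(plaintext: string, key: Buffer): string {
  const iv = randomBytes(12);
  const cipher = createCipheriv('aes-256-gcm', key, iv);
  const ciphertext = Buffer.concat([cipher.update(plaintext, 'utf8'), cipher.final()]);
  const tag = cipher.getAuthTag();
  return Buffer.concat([iv, tag, ciphertext]).toString('hex');
}

const demoKey = randomBytes(32); // throwaway 256-bit key for the demo
const first = encryptDemo('my_api_key', demoKey);
const second = encryptDemo('my_api_key', demoKey);

// Same plaintext, same key, different IVs: the outputs do not match,
// so an equality query on the stored ciphertext can never find the row.
console.log(first === second);
```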

The Solution: Hash-Based Lookup

The fix is elegant: store a hash of the API key alongside the encrypted version.

Unlike encryption, hashing is deterministic—the same input always produces the same output. And unlike encryption, we don't need to reverse it. We just need to find a match.

import crypto from 'node:crypto';

function hashApiKey(apiKey: string): string {
  return crypto.createHash('sha256').update(apiKey).digest('hex');
}

Figure: Comparing Encryption vs Hashing

New Schema

model ApiKey {
  id             String  @id @default(uuid())
  keyHash        String? @unique // SHA-256 hash for O(1) lookup
  encryptedKey   String  // AES-256-GCM encrypted key
  status         String
  organizationId String
  // ... other fields
}
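With this schema, both columns are filled in when a key is issued. The sketch below shows one way that could look; buildApiKeyRecord, the ApiKeyRecord interface, the default prefix, and the injected encrypt function are hypothetical names used for illustration, not our production code.

```typescript
import { createHash, randomBytes, randomUUID } from 'node:crypto';

// Mirrors the fields of the ApiKey model above.
interface ApiKeyRecord {
  id: string;
  keyHash: string | null;
  encryptedKey: string;
  status: string;
  organizationId: string;
}

// Hypothetical helper: `encrypt` is passed in so the sketch stays
// independent of any particular encryption implementation.
function buildApiKeyRecord(
  organizationId: string,
  encrypt: (plaintext: string) => string,
  prefix: string = 'paymint_production_apikey_',
): { apiKey: string; record: ApiKeyRecord } {
  const apiKey = prefix + randomBytes(32).toString('hex');
  const record: ApiKeyRecord = {
    id: randomUUID(),
    keyHash: createHash('sha256').update(apiKey).digest('hex'), // lookup column
    encryptedKey: encrypt(apiKey), // recoverable copy
    status: 'active',
    organizationId,
  };
  return { apiKey, record };
}
```

The plaintext apiKey is returned to the caller once; only the hash and the ciphertext are persisted.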

New Validation Logic

export async function validateApiKey(apiKey: string): Promise<ValidationResult> {
  const keyHash = hashApiKey(apiKey);

  // O(1) lookup using unique index
  const record = await database.apiKey.findFirst({
    where: {
      keyHash: keyHash,
      status: 'active',
    },
  });

  if (!record) {
    return { isValid: false };
  }

  // Defense in depth: verify by decrypting
  const decryptedKey = decrypt(record.encryptedKey);
  if (decryptedKey !== apiKey) {
    return { isValid: false };
  }

  return {
    isValid: true,
    organizationId: record.organizationId,
  };
}

Now validation is O(1)—constant time regardless of how many API keys exist.

Figure: The New Request Flow (Optimized API Key Validation Flow)

Performance Comparison

| Active API Keys | Old Time (O(n)) | New Time (O(1)) | Improvement |
| --- | --- | --- | --- |
| 10 | 50ms | ~5ms | 10x faster |
| 100 | 500ms | ~5ms | 100x faster |
| 1,000 | 5s | ~5ms | 1,000x faster |
| 10,000 | 50s | ~5ms | 10,000x faster |

Why Keep the Encrypted Key?

You might wonder: if we have the hash, why bother with encryption?

Two critical reasons:

1. Defense in Depth

After finding a hash match, we decrypt and verify. This protects against hash collisions (astronomically unlikely with SHA-256, but defense in depth is good practice). If an attacker somehow found a collision, they still wouldn't get through.

2. Key Rotation & Recovery

We need the actual key value if we ever have to:

- Re-encrypt keys (e.g., rotating the encryption key)
- Migrate to a different encryption algorithm
- Support key export features

The hash alone isn't reversible, so without the encrypted copy we'd lose the original keys forever.
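Rotation also shows why the two columns are independent: the hash depends only on the plaintext, so re-encrypting never touches it. A minimal sketch, assuming hypothetical decryptOld and encryptNew helpers that wrap decryption under the outgoing key and encryption under the incoming one (StoredKey and rotateEncryption are illustrative names as well):

```typescript
// Only the two columns relevant to rotation are modeled here.
interface StoredKey {
  keyHash: string;
  encryptedKey: string;
}

// Re-encrypts every record under the new key and returns updated copies.
function rotateEncryption(
  records: StoredKey[],
  decryptOld: (ciphertext: string) => string,
  encryptNew: (plaintext: string) => string,
): StoredKey[] {
  return records.map((record) => ({
    ...record, // keyHash is carried over unchanged
    encryptedKey: encryptNew(decryptOld(record.encryptedKey)),
  }));
}
```

Customers keep using the same API keys throughout, because lookups go through keyHash and never depend on which encryption key is current.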

What About Rainbow Tables?

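API keys are high-entropy random strings. The random part comes from crypto.randomBytes(32), which produces 32 bytes: 64 hex characters, or 256 bits of entropy (the full key, with its prefix, is longer). Rainbow tables are only practical for low-entropy inputs like passwords or common phrases.

The search space for the random part is 16^64 = 2^256 ≈ 10^77 possible values. For comparison:

- Number of atoms in the observable universe: ~10^80
- SHA-256 output space: 2^256 ≈ 10^77 (the same size as the key space)

Precomputing hashes for even a tiny fraction of that space is infeasible, so rainbow tables aren't a practical attack here.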
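The size of the random part is easy to sanity-check. In this sketch, crypto.randomBytes(32) yields 32 bytes, which hex-encode to 64 characters, and the exponents are computed with logarithms to avoid big-number arithmetic (the variable names are illustrative):

```typescript
import { randomBytes } from 'node:crypto';

// 32 random bytes hex-encode to 64 characters.
const randomPart = randomBytes(32).toString('hex');
console.log(randomPart.length); // 64

// Base-10 exponent of the number of possible values.
// 16^64 and 2^256 describe the same space, roughly 10^77.
const keySpaceExponent = randomPart.length * Math.log10(16);
const sha256Exponent = 256 * Math.log10(2);
console.log(Math.floor(keySpaceExponent), Math.floor(sha256Exponent)); // 77 77
```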

What About Timing Attacks?

We use constant-time comparison for the final verification to prevent timing attacks:

import crypto from 'node:crypto';

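function secureCompare(a: string, b: string): boolean {
  const bufferA = Buffer.from(a);
  const bufferB = Buffer.from(b);

  // timingSafeEqual throws if byte lengths differ, so compare
  // the encoded buffers rather than the string lengths
  if (bufferA.length !== bufferB.length) {
    return false;
  }

  return crypto.timingSafeEqual(bufferA, bufferB);
}

// Use in validation
if (secureCompare(decryptedKey, apiKey)) {
  return { isValid: true, organizationId: record.organizationId };
}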

This prevents attackers from using response time variations to guess key characters.
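One common variation, shown here as a sketch and not what our code does, hashes both inputs first so the buffers handed to crypto.timingSafeEqual always have the same length and no early length check is needed. The name secureCompareDigest is hypothetical.

```typescript
import { createHash, timingSafeEqual } from 'node:crypto';

// Compares SHA-256 digests instead of the raw strings. Both digests are
// always 32 bytes, so timingSafeEqual never throws on a length mismatch.
function secureCompareDigest(a: string, b: string): boolean {
  const digestA = createHash('sha256').update(a).digest();
  const digestB = createHash('sha256').update(b).digest();
  return timingSafeEqual(digestA, digestB);
}
```

The trade-off is two extra hash computations per comparison, which is negligible next to a database round trip.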

Security Layers Summary

Figure: Defense-in-Depth Security Layers

Results

After deploying this change, we saw immediate and dramatic improvements:

- P50 latency: Reduced by 40ms (from 45ms to 5ms)
- P99 latency: Reduced by 200ms (from 205ms to 5ms)
- Database load: Significantly reduced (no more full table scans)
- Scalability: Removed the O(n) bottleneck entirely

More importantly, we removed a scaling time bomb. Our API can now handle 10x, 100x, or even 10,000x more customers without degrading authentication performance.

Key Takeaways

1. Audit Your Authentication Paths

Authentication runs on every single request. Even small inefficiencies compound dramatically. A 50ms slowdown might seem negligible, but multiply that by millions of requests and you've got a serious problem.

2. Encryption ≠ Hashing

- Encryption: Reversible, non-deterministic, requires a secret key
- Hashing: One-way, deterministic, no secret needed

Use encryption when you need to retrieve the original value. Use hashing when you only need to verify a match. For API keys, we need both—hash for lookup, encryption for storage.

3. Plan for Scale from Day One

Code that works perfectly at 10 customers might completely break at 10,000. Always think about algorithmic complexity:

- O(1): Constant time - scales infinitely
- O(log n): Logarithmic - scales very well
- O(n): Linear - degrades as you grow
- O(n²): Quadratic - disaster waiting to happen

4. Migrate Gracefully

Use fallbacks and auto-migration to avoid big-bang deployments. Our approach:

- Made changes backward-compatible
- Auto-migrated on first use
- Monitored migration progress
- Removed legacy code only after full migration

Zero downtime, zero broken API keys.
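The migration code itself isn't shown in this post, so the following is only a sketch of what "fallback plus auto-migration on first use" can look like. It uses an in-memory array in place of the database, and every name in it (MigratingKey, sha256Hex, validateWithFallback, the injected decrypt) is hypothetical.

```typescript
import { createHash } from 'node:crypto';

// In-memory stand-in for the api_keys table during migration:
// legacy rows have keyHash === null until they are first used.
interface MigratingKey {
  keyHash: string | null;
  encryptedKey: string;
  organizationId: string;
}

function sha256Hex(value: string): string {
  return createHash('sha256').update(value).digest('hex');
}

// Returns the organization id for a valid key, or null otherwise.
function validateWithFallback(
  apiKey: string,
  rows: MigratingKey[],
  decrypt: (ciphertext: string) => string,
): string | null {
  const keyHash = sha256Hex(apiKey);

  // Fast path: the row already has a hash (an indexed query in a real database).
  const hit = rows.find((row) => row.keyHash === keyHash);
  if (hit) {
    return decrypt(hit.encryptedKey) === apiKey ? hit.organizationId : null;
  }

  // Fallback: scan only rows without a hash, and backfill on a match.
  for (const row of rows) {
    if (row.keyHash === null && decrypt(row.encryptedKey) === apiKey) {
      row.keyHash = keyHash; // auto-migrate on first use
      return row.organizationId;
    }
  }
  return null;
}
```

As keys get used, the set of rows without a hash shrinks, so the slow path gets cheaper over time and can be deleted once no such rows remain.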

5. Defense in Depth Works

Even with hash-based lookup, we still verify by decrypting. This belt-and-suspenders approach:

- Protects against hash collisions
- Enables future key rotation
- Provides an extra security layer
- Costs only one additional decryption (~5ms)

The tiny performance cost is worth the security benefits.

6. Database Indexes Are Your Friend

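The @unique index on keyHash is what makes the fast lookup possible. Strictly speaking, a lookup on a typical B-tree index is O(log n) rather than O(1), but at this scale it behaves like constant time. Without the index, we'd still be doing table scans. Always index your lookup fields.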

Conclusion

This optimization transformed our API from a scaling liability into a performant, production-ready system. By understanding the difference between encryption and hashing, and applying the right tool for each job, we turned a potential disaster into a success story.

The next time you're implementing authentication, remember: how you store credentials matters just as much as that you store them securely.

Building a SaaS? Check out Paymint—we handle subscription billing so you can focus on your product.
